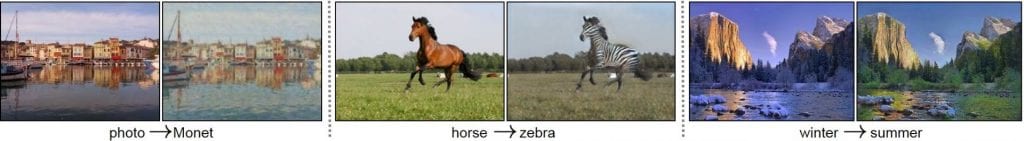

Most of the progress in machine learning over the last several years has come from entirely data-driven, discriminative approaches that are agnostic to how the data was produced. Recently, however, interest has resurged in generative approaches, which construct a model, either deterministic or probabilistic, of how the data was produced. A good generative model enables more robust machine learning from fewer labelled training examples, as well as powerful signal and image processing. Indeed, much of the recent excitement in deep learning involves so-called generative adversarial networks (GANs), which can transform photographs into Monet paintings, horses into zebras, and your face image into Lady Gaga's.

CycleGAN examples

This course will review the past, present, and future of generative models, from classical hidden Markov models to GANs. Specific topics include Gaussian mixture models, graphical models (Bayesian networks), signal manifolds, autoencoders, GANs, and deep decoders, with applications in signal and image transformation, synthesis, and inverse problems.

This is a “reading course,” meaning that students will read classic and recent papers from the technical literature and present them to the rest of the class in a lively debate format. Discussions will aim to identify common themes and important trends in the field. Students will also get hands-on experience with optimization problems and deep learning software through a group project.

- Location: 1075 Duncan Hall

- Time: Friday 2pm

- Instructor: Richard Baraniuk, 2028 Duncan Hall

- Office Hours: By appointment

- Prerequisites: Required: linear algebra, introduction to probability and statistics, and familiarity with a programming language such as Python or MATLAB. Desired: knowledge of signal processing, machine learning, convex optimization, and deep learning.

- Course Website: Piazza Course Management Site (use of this site is mandatory; all official announcements will be made there)